Content is Queen. The ultimate point of any mind map is to use and present information clearly in a way that communicates conclusions that are valid, reliable, and important.

Some examples. Are all of those mind maps floating around showing psychological variables and purporting to illustrate major findings and theories actually using valid information? (Guessing what all people feel like or how they learn and thinking it must be valid since, after all, you are a human, is probably not an indication that you are using highly valid data.) What is the expertise of the individuals who generated the information portrayed in the mind map? Was the information based on empirical studies, well-established theory, the musings of a pop psychology writer, what your Mom taught you, what your best friend thinks, what you saw in a movie? Did you (as a student or casual reader) just read a popular psychology book and accept what that person wrote on how you can be richer, more famous, happier, more socially connected, sexier, and thinner?

Much attention in mind mapping goes into the “artistic presentation” aspects of the maps: the colors, the rules, the images. And yes, prettier, neater, more original, and more creative maps are probably better received than those that use none of the great tools of visual thinking. But the reality is that the clothing does not make the person, nor does the artistry of the map make the content more valid or reliable or important.

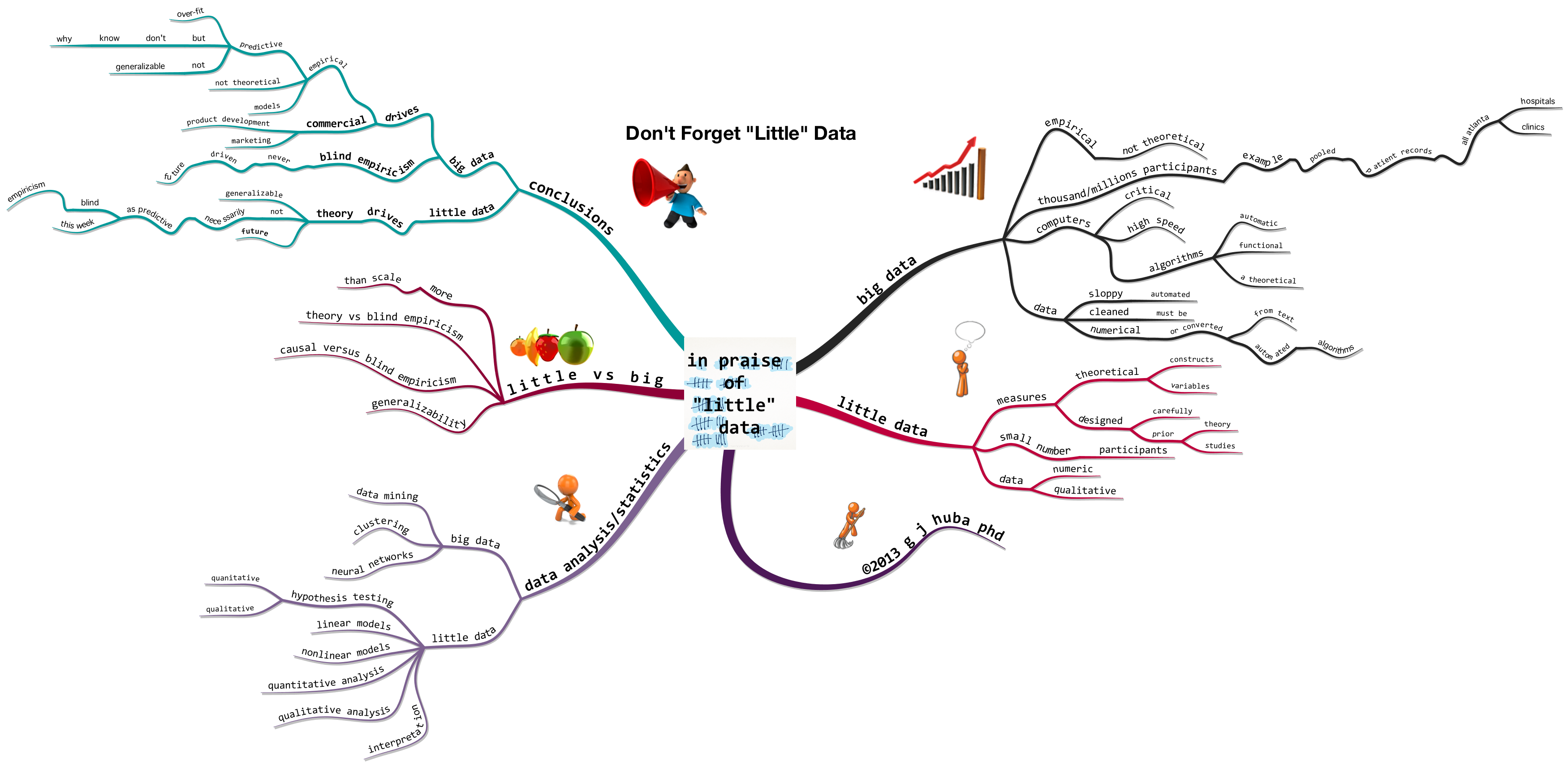

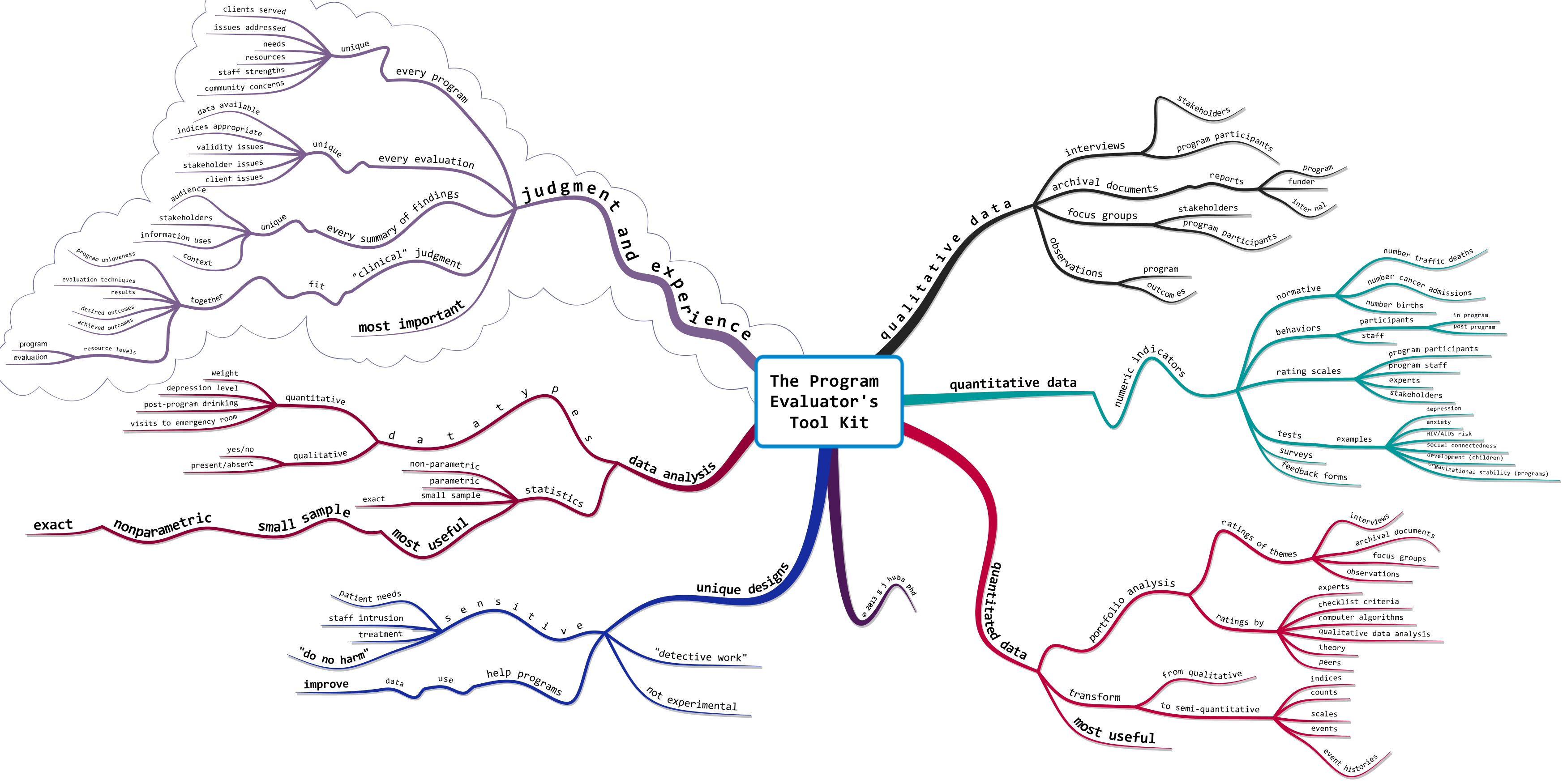

The first mind map below shows some of my thoughts and suggestions about how mind maps should be reviewed by experts in the content areas being addressed if the map will be used for purposes other than personal learning or process documentation or as art. That is, if the point of the map is to present facts, then the purported facts really need to be checked by someone who is an expert in the content area. In most cases, I have no problem with authors being responsible for their own work so long as they clearly state their own expertise levels and where the data for the mind maps originated. I have a big problem with someone who is not a trained mental health professional telling the world how to diagnose depression or ADHD. If the author of the map is not an acknowledged expert presenting her or his own work, then the source and limits of the information in the mind map need to be stated, and in some cases, independently evaluated.

The second mind map is actually just the first one, produced in iMindMap, exported into the alternative computer program MindNode Pro. Is the first map prettier than the second? Sure seems so to me. Is the first map more valid? No. It contains identical information. Does the first map communicate better than the second? Sure seems so to me.

Keep in mind that the goal of most mind mapping is to present valid, reliable, and important information in a way that is easily understood, easily remembered, and easily communicated. Using this criterion, the first map is probably significantly better.

The third mind map is identical in content to the two maps just considered but was generated using default options in the program XMIND. The style of the mind map is similar to that of another program (Mindjet AKA MindManager), and is one that many argue is the best for presenting information to those in business.

Hopefully by the time you read this, you will have looked carefully at the actual content of the mind map in one or more of the variations. Content is Queen; it is all about the ideas. In the process of mapping, we need to incorporate references to the source of the information displayed. Pretty is good and memorable, but is not more important than the information presented. Content is Queen, although she does look better in a nice dress or business suit.

topics and sub-topics: evaluating mind maps with “expert content” criteria information accurate source stated authoritative recognized cited by others opinion? state adult learning multi-channel non-hierarchical non-linear iterative approximations successive small steps link existing knowledge experience emotions cultural memory consensus neuroscience “catchy” style serious disease disaster war human toll horror funny often many topics “lighter” facts graphic usually images fonts colors this opinion mine g j huba phd @drhubaevaluator © 2012 all rights reserved based professional judgment experience 15 years healthcare professionals researchers physicians nurses psychologists social workers others administrators no science citations but read dr seuss really early lexical mind mapper organic style tony buzan thinking flexible suggestions discussion @biggerplate quick notes iteration 1 imindmap mac written on limited to content purportedly expert reproducible empirical “textbook” peer review? content content content content most important meaningful valid reliable educational goals objectives audience mind maps uniqueness used color fonts non-linearity “artistic” memorable by established experts content visual thinkers other concerns mission critical data good empirical public never present as perfect examples medical safety criminal justice financial mental health reproducibility mind map logic data logic education logic expert knowledge conclusions
